Complex Systems Design and Operation

Our faculty members tackle a diverse set of problem-driven research questions with real-world impact, including:

- How do you design a spacecraft quickly and well?

- How do you manage a disaster response to help the greatest number of people?

This research theme asks how to design and operate many types of complex systems. Our faculty study systems as diverse as spacecraft, energy, transportation, and the disaster response enterprise. Designing these systems (selecting their functions and structure) involves a major commitment of resources and locks in choices for years to come, so it is crucial to get those initial decisions right. Operating these systems (choosing how to run them from day to day or year to year) can yield surprising innovations, or fine-tuning that delivers major performance improvements, greater resilience, or other desirable system properties.

Research Stories

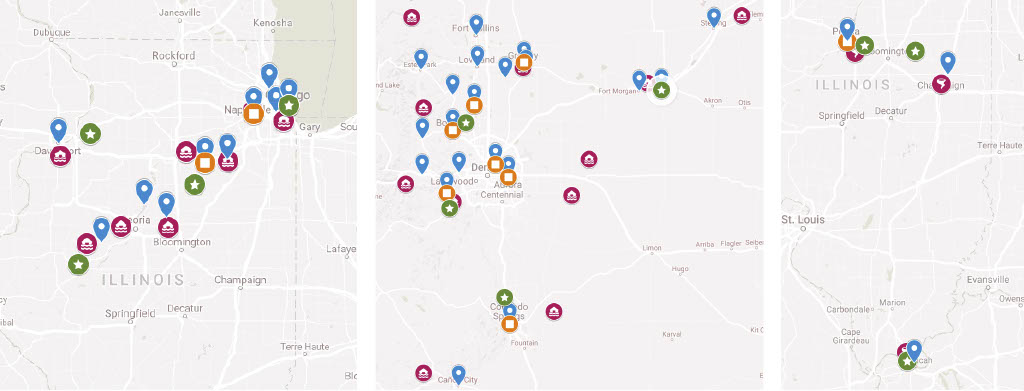

Where to set up Disaster Recovery Centers to best serve the affected population and minimize costs?

Maps of Chicago-area flooding (left), Colorado flooding (center), and Champaign-area tornadoes (right), showing affected counties (red disaster symbols), actual DRCs (blue pins), the DRCs placed by the jurisdiction model (orange squares), and the DRCs placed by the travel time model (green stars).

Disaster response is a challenging environment for data-driven decision support because of its rarity, uniqueness, and high stakes. A study by Professor Erica Gralla and colleagues from MIT and the U.S. Federal Emergency Management Agency (FEMA) (1) develops and demonstrates practical decision support approaches for an important problem faced by FEMA, (2) identifies challenges and successes during their partial implementation, and (3) explores how such approaches can encourage the adoption of data-driven decision-making in crisis situations. Specifically, the study develops approaches for locating and staffing temporary Disaster Recovery Centers (DRCs), which provide in-person service to disaster-affected communities. Working with FEMA, Professor Gralla’s team developed two models that aim to improve service to survivors while minimizing costs. One model fits easily into FEMA’s current decision-making process, while the other further improves service by challenging some existing assumptions. Testing the models on data from three past disasters, the team found that this decision support could reduce costs by 75% on average by eliminating unnecessary DRCs and overstaffing, while maintaining or reducing the maximum travel time that disaster-affected residents need to reach a DRC. Aspects of the models have already been used during disaster operations. FEMA’s experience highlights the potential for data- and model-driven analyses to improve resource allocation, and it demonstrates a way to improve organizational decisions by developing models that either align with or challenge the prevailing decision-making culture. This work was published in Decision Sciences Journal and won the journal’s 2020 Best Paper Award.
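To give a flavor of the kind of question a travel-time-based placement model answers, here is a minimal sketch. It is not the published jurisdiction or travel-time model: the county names, candidate sites, travel times, and the helper names `place_drcs` and `max_travel_time` are all hypothetical, and staffing and cost are left out entirely. The sketch simply looks for the fewest DRC sites that keep every affected county within a chosen maximum travel time, breaking ties by the lowest worst-case travel time.

```python
from itertools import combinations

# Hypothetical travel times in minutes: TRAVEL[county][candidate_site].
# Illustrative numbers only, not FEMA data.
TRAVEL = {
    "County A": {"Site 1": 20, "Site 2": 55, "Site 3": 70},
    "County B": {"Site 1": 45, "Site 2": 25, "Site 3": 60},
    "County C": {"Site 1": 80, "Site 2": 40, "Site 3": 30},
    "County D": {"Site 1": 65, "Site 2": 35, "Site 3": 50},
}


def max_travel_time(travel, open_sites):
    """Worst-case time for any county to reach its nearest open site."""
    return max(min(times[s] for s in open_sites) for times in travel.values())


def place_drcs(travel, max_minutes):
    """Return the smallest set of open sites that keeps every county within
    max_minutes of a DRC, preferring the lowest worst-case travel time among
    equally small sets. Exhaustive search: only for small illustrative cases."""
    sites = sorted(next(iter(travel.values())))
    for k in range(1, len(sites) + 1):
        feasible = [
            combo
            for combo in combinations(sites, k)
            if max_travel_time(travel, combo) <= max_minutes
        ]
        if feasible:
            return min(feasible, key=lambda c: max_travel_time(travel, c))
    return None  # no placement satisfies the travel-time limit


if __name__ == "__main__":
    best = place_drcs(TRAVEL, max_minutes=45)
    print(best, max_travel_time(TRAVEL, best))  # ('Site 1', 'Site 2') 40
```

With a 45-minute limit, no single site suffices, and opening Sites 1 and 2 keeps every county within 40 minutes; tightening the limit to 30 minutes makes the toy instance infeasible. A real model would replace the brute-force search with an integer program and add staffing and cost terms.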

Who in the “crowd” can solve my complex robotic design problem?

Freelancer.com landing page for the NASA Robotic Arm Challenge field experiment

Firms are increasingly using prize competitions, which broadcast a problem to the “crowd” and award a pre-specified prize to the solver with the best solution, as a source of innovation. Yet despite famous success stories (e.g., the Netflix Prize) that hint at the approach’s enormous promise, engineering practitioners remain skeptical that these “open” methods can be applied effectively to complex system design problems. At the heart of the issue is whether the crowd, and specifically the individual solvers within it, can contribute high-quality, feasible solutions often enough to make prizes a viable source of innovation. Managers also lack guidance on when, and for what types of problems, these tools are most effective. Research and an associated field experiment conducted by Professor Zoe Szajnfarber and a team of SzajnLab graduate students add important evidence and insights to that discussion. In the past, prizes have typically been framed as a means of identifying a single best solution, and systems engineers and managers have rightly been skeptical of whether the (assumed novice) crowd can contribute appropriate solutions to complex problems. By demonstrating that the crowd includes many contributors with within-discipline training who can provide high-quality engineering analysis, the results open the door to another class of use: a means to explore the tradespace of solution alternatives more quickly, cheaply, and completely. Rather than viewing prize competitions as one exotic tool in the toolkit, this work offers a path to treating prizes as a flexible tool that can augment or complement existing engineering design practices. An overview of these results is published in IEEE Transactions on Engineering Management. Other contributors to this research included Lihui Zhang, Suparna Mukherjee, Jason Crusan, Anthony Hennig, and Ademir Vrolijk.

Faculty

Hernan Abeledo

Associate Professor

David Broniatowski

Associate Professor

Erica Gralla

Associate Professor

Ekundayo Shittu

Associate Professor

Zoe Szajnfarber

Professor and Department Chair

Rene van Dorp

Professor